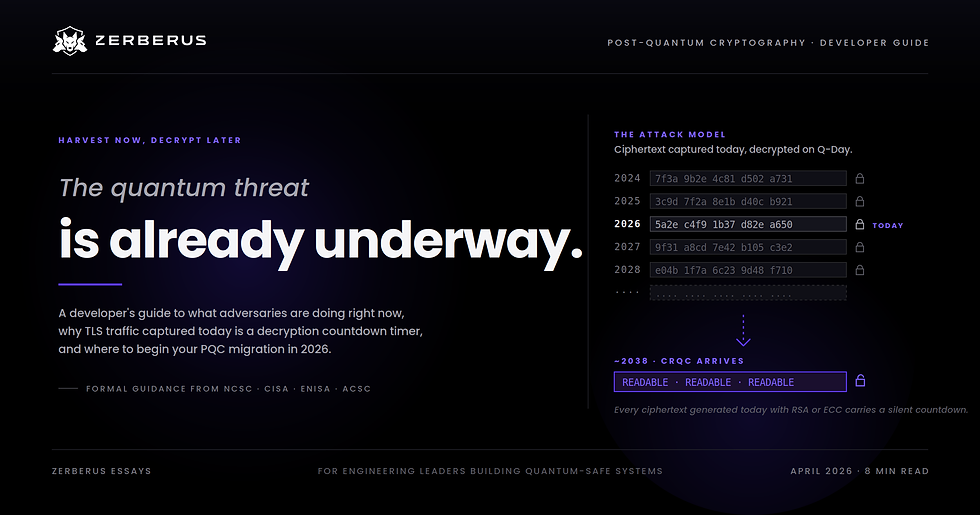

Harvest Now, Decrypt Later (HNDL): A Developer's Guide to the Quantum Threat Already Underway

- Sriram G

- Apr 21

- 8 min read

TL;DR: Harvest Now, Decrypt Later (HNDL) is the practice of capturing today's encrypted data and storing it until future quantum computers can decrypt it. Multiple government cybersecurity agencies have formally stated that HNDL is already happening. If your application protects data that must stay secret for more than a few years (tokens, TLS traffic, backups, health or financial records), you are already exposed. This guide explains what HNDL is, why it matters specifically because of quantum computing, and what developers should start doing in 2026.

What is Harvest Now, Decrypt Later?

Harvest Now, Decrypt Later, also called Store Now, Decrypt Later (SNDL) or retrospective decryption, describes a simple strategy: capture encrypted data today, store it indefinitely, and wait for the day cryptanalysis catches up. The attacker cannot read your traffic right now, but they do not need to. They only need to keep the ciphertext until the key material becomes recoverable.

This is structurally different from most other classes of cyber attack. It does not require a zero-day, a misconfigured server, or a phished credential. The adversary needs only network-path access (a peering point, a fibre tap, a compromised cloud workload) and patience.

The defensive problem is uncomfortable: you cannot detect an HNDL collection, you cannot revoke ciphertext that has already left your network, and you cannot tell which of your past sessions are sitting in someone's archive waiting for quantum to mature.

Why is this specifically a quantum problem?

The public-key cryptography protecting most of the internet today (RSA, ECDSA, ECDH, Diffie-Hellman) relies on two mathematical problems that are hard for classical computers: integer factorisation and discrete logarithms. These primitives underpin TLS handshakes, SSH key exchange, JWT signatures, S/MIME, and PGP.

Peter Shor showed in 1994 that a sufficiently powerful quantum computer can solve both problems in polynomial time. On classical hardware, breaking RSA-2048 is estimated to take far longer than the age of the universe. On a Cryptographically Relevant Quantum Computer (CRQC), published resource estimates put the same key at hours to days.

Based on open-source intelligence, no CRQC is publicly known to exist yet. Credible estimates from researchers and cybersecurity agencies place its arrival somewhere between 2030 and 2040, with meaningful uncertainty in both directions. That uncertainty is the problem. Every ciphertext you generate today with RSA or ECC carries a decryption countdown timer that you cannot see and cannot stop.

Is HNDL actually happening?

It is not a theoretical concern. The UK NCSC, US CISA, European ENISA, and Australian ACSC have each published formal guidance stating that adversaries are currently collecting and storing encrypted traffic for future decryption.

The US Office of Management and Budget cited HNDL as a primary justification for the federal post-quantum migration strategy in July 2024.

Beyond agency statements, the technical community has documented many large-scale BGP hijacks over the past decade in which sections of global internet traffic were rerouted through adversary-controlled infrastructure. Organisations like the Internet Society, RIPE NCC, and MANRS publish ongoing analyses of these incidents. A single successful BGP hijack at a backbone peering point hands an adversary hours or days of raw ciphertext, more than enough for an HNDL collection campaign.

Mosca's Inequality, the one formula that tells you if you're exposed

Cryptographer Michele Mosca proposed a simple framework for reasoning about quantum risk. It is now the standard mental model for HNDL exposure:

If X + Y > Z -> you have a problem.

X = how long your data must stay confidential

Y = how long your migration to post-quantum cryptography will take

Z = how long until a CRQC exists

For a healthcare backend with 30-year patient records, a 4-year migration, and a CRQC estimate around 2038, X + Y comes to 34 years against a Z of roughly 12, so the data is exposed by more than 20 years. For ephemeral session data with a shelf life of minutes, you are fine. Most production systems sit somewhere in between, and the middle is where HNDL risk lives.
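As a rough illustration, the inequality can be checked in a few lines of Python. This is a minimal sketch rather than part of Mosca's framework itself: the `MoscaInput` class and the function names are hypothetical, and the example assumes a planning year of 2026, so a 2038 CRQC estimate gives Z = 12.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MoscaInput:
    shelf_life_years: float   # X: how long the data must stay confidential
    migration_years: float    # Y: how long the PQC migration will take
    years_until_crqc: float   # Z: estimated time until a CRQC exists


def mosca_margin(m: MoscaInput) -> float:
    """Return X + Y - Z. A positive value means the data is exposed."""
    return m.shelf_life_years + m.migration_years - m.years_until_crqc


def is_exposed(m: MoscaInput) -> bool:
    """True when X + Y > Z."""
    return mosca_margin(m) > 0


# Healthcare example from the text: 30-year records, 4-year migration.
# Assumption: planning year 2026 and a CRQC around 2038, so Z = 12.
healthcare = MoscaInput(30, 4, 2038 - 2026)
print(mosca_margin(healthcare))  # 22
print(is_exposed(healthcare))    # True
```

Running the same check per data flow, with X taken from the data and Y from your own migration estimate, gives a crude ordering of which systems to look at first.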

For the full framework, including realistic numbers for each parameter, worked examples across four industries, and what actually happens when you try to re-encrypt legacy data, read our deep dive: Mosca's Inequality: You're Probably Already Too Late on Post-Quantum Migration. The full technical white paper is available as a download from that post.

The data shelf-life self-check

Your X comes from the data itself, not from your infrastructure. Before writing any migration plan, run this check for every data flow your team owns:

| Data type | Typical shelf life (X) | HNDL-critical? |
| --- | --- | --- |
| Session cookies, CSRF tokens | Minutes to hours | Low |
| OAuth refresh tokens | Days to weeks | Low to Medium |
| Application logs, metrics | Months | Medium |
| Customer PII | 7+ years (GDPR-dependent) | High |
| Financial transaction records | 7 to 10 years | High |
| Health records | 20 to 30+ years | Critical |
| Source code, IP, trade secrets | Indefinite | Critical |
| Long-lived authentication credentials | Indefinite | Critical |

Anything in the High or Critical rows that currently travels over TLS protected by classical key exchange, or is encrypted at rest with RSA-wrapped keys, is sitting on the HNDL clock.

What HNDL looks like in your code

Security teams talk about HNDL in the abstract. Developers ship it in specific lines of code. Two representative patterns:

Field-level encryption of sensitive user data

```python
from cryptography.hazmat.primitives.asymmetric import padding
from cryptography.hazmat.primitives import hashes

# public_key (an RSA public key), ssn, user_id and db are defined elsewhere in the application.
encrypted_ssn = public_key.encrypt(
    ssn.encode("utf-8"),
    padding.OAEP(
        mgf=padding.MGF1(algorithm=hashes.SHA256()),
        algorithm=hashes.SHA256(),
        label=None,
    ),
)
db.store(user_id, encrypted_ssn)
```

Every row you write to that table sits in live storage, replicas, nightly backups, analytics exports, and forgotten dev environment dumps for years. An HNDL collector who siphons a database snapshot today has your users' social security numbers, medical identifiers, or account numbers lined up for batch decryption on Q-Day. The ciphertext is safe now. It will not be safe later.

JWT signatures with RSA

```javascript
// jwt is assumed to be the jsonwebtoken package; payload and privateKey are defined elsewhere.
const token = jwt.sign(payload, privateKey, { algorithm: 'RS256' });
```

Every token you issue with RS256, RS384, RS512, or ES256 is a verifiable claim signed with a quantum-vulnerable key. The attack model here is slightly different: it is not "read the plaintext", it is "forge a historical token that appears legitimate". Once the signing key is recoverable, an adversary can produce a token dated to 2024, signed with the real key of the time, indistinguishable from one you actually issued.

The same shape shows up in KMS-wrapped backups, client-side file uploads that wrap a data key with RSA, long-lived service-to-service TLS, and signed artefacts (Git commits, container images, release packages). The ciphertext outlives the algorithm.
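Patterns like the two above can be surfaced with a crude text scan before reaching for a dedicated tool. The following is a minimal sketch, assuming Python, JavaScript, and TypeScript sources: the pattern list and the `scan_text` / `scan_tree` names are hypothetical and deliberately small, and a regex match only marks a line for human review.

```python
import re
from pathlib import Path

# Hypothetical, deliberately short pattern list; a real inventory needs far more.
VULNERABLE_PATTERNS = {
    "RSA/ECDSA JWT algorithm": re.compile(r"\b(RS|PS|ES)(256|384|512)\b"),
    "RSA-OAEP encryption": re.compile(r"\bpadding\.OAEP\b"),
    "RSA key generation": re.compile(r"\brsa\.generate_private_key\b"),
    "ECDH key exchange": re.compile(r"\bec\.ECDH\b"),
}


def scan_text(text: str) -> list[tuple[int, str]]:
    """Return (line_number, finding) pairs for one file's contents."""
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for label, pattern in VULNERABLE_PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, label))
    return findings


def scan_tree(root: str, suffixes=(".py", ".js", ".ts")) -> dict[str, list[tuple[int, str]]]:
    """Walk a source tree and collect findings per file path."""
    results = {}
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            hits = scan_text(path.read_text(errors="ignore"))
            if hits:
                results[str(path)] = hits
    return results


sample = "const token = jwt.sign(payload, key, { algorithm: 'RS256' });\nx = 1\npadding.OAEP(\n"
print(scan_text(sample))
# [(1, 'RSA/ECDSA JWT algorithm'), (3, 'RSA-OAEP encryption')]
```

A scan like this misses algorithms chosen through configuration, dependencies, or infrastructure, so treat its output as a starting list, not an inventory.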

But can't I just re-encrypt this data later?

This is the first question most developers ask once they see HNDL in their own code, and the honest answer is "partially." Data you still control (production databases, backups in your cloud accounts) can be decrypted with the old algorithm and re-encrypted with the new one. Data that has already left your network (TLS sessions harvested in transit, leaked backups, forgotten dev dumps) cannot. For a typical SaaS company, re-encryption covers maybe 60 to 80 percent of the risk surface.

The white paper linked above walks through the complete re-encryption decision table: where the ciphertext lives, whether it's re-encryptable, and what the operational costs actually look like.

What's safe, what's broken, and what's weakened

Not all cryptography fails the same way against quantum attacks.

Broken, replace these. RSA, ECDSA, ECDH, classical Diffie-Hellman, and DSA all fall to Shor's algorithm. There is no safe key size for these algorithms against a CRQC. Migrate to NIST's standardised replacements: FIPS 203 (ML-KEM) for key encapsulation, FIPS 204 (ML-DSA) for digital signatures, FIPS 205 (SLH-DSA) as a stateless hash-based signature backup.

Weakened, size matters. Symmetric algorithms and hash functions are hurt by Grover's algorithm, which gives roughly a square-root speedup on brute-force search. AES-128 drops to roughly 64-bit effective security against an idealised quantum attacker, too thin a margin for long-shelf-life data. AES-256 drops to roughly 128-bit effective security, still considered safe. SHA-256 remains acceptable for most uses; SHA-384 and above is preferable for new long-lived designs.

Safe, keep using. AES-256 in authenticated modes (GCM, GCM-SIV), SHA-384 and SHA-512, HMAC with SHA-384 or larger, and the three NIST PQC standards above.

A nuance developers often miss: upgrading from RSA to ML-KEM solves the key-exchange problem, but if you still use AES-128 for bulk encryption, your pipeline is only as strong as its weakest link. Both ends of the cryptographic stack need attention.
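The Grover arithmetic behind those figures is simple enough to write down. This is a sketch of the idealised model only, in which a square-root speedup on a 2^n search halves the exponent; it ignores the practical cost of running Grover's algorithm at scale, and the function names are hypothetical.

```python
def grover_effective_bits(key_bits: int) -> int:
    """Idealised post-Grover security: searching 2**n keys takes about 2**(n/2) steps."""
    return key_bits // 2


def weakest_symmetric_link(key_sizes: list[int]) -> int:
    """Effective security of a pipeline is bounded by its weakest symmetric component."""
    return min(grover_effective_bits(bits) for bits in key_sizes)


for name, bits in [("AES-128", 128), ("AES-256", 256)]:
    print(name, grover_effective_bits(bits))
# AES-128 64
# AES-256 128

# A pipeline that wraps keys with AES-256 but encrypts bulk data with AES-128
# is still bounded by the AES-128 stage.
print(weakest_symmetric_link([256, 128]))  # 64
```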

What should developers do now?

1. Audit what you have. Inventory every cryptographic primitive in your application source code, dependencies, infrastructure configuration, TLS endpoints, and signing pipelines. Focus on three questions: which algorithms, what key sizes, and what data they protect. The output is a Cryptographic Bill of Materials (CBOM), standardised by CycloneDX 1.6.

2. Prioritise by data shelf life, not code volume. The five lines of code that sign long-lived tokens matter more than the five thousand lines handling session state. Your Mosca math tells you which systems are already past their migration deadline.

3. Build crypto-agility into every new design. Abstract cryptographic operations behind an interface so the underlying algorithm can be swapped without refactoring the caller. Every hard-coded algorithm name in your codebase is future technical debt.

4. Pilot hybrid modes before forklift migration. Hybrid TLS (X25519 combined with ML-KEM) and hybrid signatures are supported in major libraries. Running classical and post-quantum algorithms in parallel lets you validate performance and compatibility before making a breaking change.

5. Start with the easy wins. JWT algorithm selection, TLS cipher suite ordering, and new certificate issuance are low-risk places to begin. HSM signing keys, long-lived root certificates, and protocol-level dependencies come later.
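The crypto-agility idea in step 3 can be sketched as a small interface plus a registry. This is one possible shape under stated assumptions, not a prescribed design: `Signer`, `HmacSigner`, and `SignerRegistry` are hypothetical names, and HMAC from the Python standard library stands in for real signature schemes so the example runs without third-party packages. A post-quantum signer such as ML-DSA would be registered behind the same two methods.

```python
import hmac
from typing import Dict, Protocol, Tuple


class Signer(Protocol):
    alg_id: str

    def sign(self, message: bytes) -> bytes: ...
    def verify(self, message: bytes, signature: bytes) -> bool: ...


class HmacSigner:
    """Stand-in implementation; a PQC signer would expose the same methods."""

    def __init__(self, alg_id: str, key: bytes, digest: str) -> None:
        self.alg_id = alg_id
        self._key = key
        self._digest = digest

    def sign(self, message: bytes) -> bytes:
        return hmac.new(self._key, message, self._digest).digest()

    def verify(self, message: bytes, signature: bytes) -> bool:
        return hmac.compare_digest(self.sign(message), signature)


class SignerRegistry:
    """Callers sign with the current default and verify by recorded algorithm ID."""

    def __init__(self) -> None:
        self._signers: Dict[str, Signer] = {}
        self.default_alg = ""

    def register(self, signer: Signer, default: bool = False) -> None:
        self._signers[signer.alg_id] = signer
        if default or not self.default_alg:
            self.default_alg = signer.alg_id

    def sign(self, message: bytes) -> Tuple[str, bytes]:
        signer = self._signers[self.default_alg]
        return signer.alg_id, signer.sign(message)

    def verify(self, alg_id: str, message: bytes, signature: bytes) -> bool:
        signer = self._signers.get(alg_id)
        return signer is not None and signer.verify(message, signature)


registry = SignerRegistry()
registry.register(HmacSigner("legacy", b"old-key", "sha256"))
old_alg, old_sig = registry.sign(b"hello")

# Swapping the default is a registration change; calling code is untouched.
registry.register(HmacSigner("next", b"new-key", "sha384"), default=True)
new_alg, new_sig = registry.sign(b"hello")
print(old_alg, new_alg)  # legacy next
```

Storing the algorithm ID next to each signature is what lets old artefacts keep verifying while new ones move to the replacement algorithm.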

The tool landscape

IBM Quantum Safe is the mature option on the software side. Quantum Safe Explorer performs deep static analysis across application source code and generates a CBOM per repository, with a VS Code extension available as of December 2025. It has strong enterprise and mainframe coverage. It is delivered through IBM Consulting, which means long engagements and enterprise budgets.

QuSecure QuProtect is the visible option on the network side. TLS and network-layer PQC remediation, integration with routing hardware, free Reconnaissance module for network-level crypto discovery.

Both are strong at what they do. Neither is built for the engineer who wants to scan a codebase in an afternoon without a procurement cycle.

Zerberus PQC is the platform we are currently building: a developer-first PQC readiness product for engineering teams. It produces a CycloneDX 1.6 CBOM from your application source code, scores quantum exposure against NIST quantum security levels, and runs offline inside your existing workflow. No enterprise contract required. We are opening it up to a small group of pilot customers right now.

FAQ

Is HNDL a real threat or just theoretical? It is treated as an active threat by every major Western cybersecurity agency, including the UK NCSC, US CISA, European ENISA, and Australian ACSC. Whether a specific adversary has harvested a specific piece of your traffic is impossible to verify from the defender's side, and that is exactly the problem. The defensive posture has to assume yes.

When will quantum computers actually break RSA? Credible estimates range from 2030 to beyond 2040. The uncertainty cuts both ways: a breakthrough could compress the timeline, and a fundamental barrier could extend it. Planning against the earliest credible date is the only safe approach for long-shelf-life data.

Do I need to migrate right now? If you ship anything with a shelf life measured in years, you need to start the discovery and planning work in 2026. Under CNSA 2.0, new US national security system procurements must be post-quantum compliant from January 2027. The EU Cyber Resilience Act takes full effect in December 2027. These deadlines are legally binding for vendors in those supply chains and are increasingly appearing in enterprise procurement questionnaires.

2026 is the year to get visibility

The migration window is narrower than it looks. CNSA 2.0 makes post-quantum compliance mandatory for new US national security system procurements in January 2027. The EU Cyber Resilience Act imposes cryptographic requirements on all digital products sold in the EU from December 2027. Cyber insurance underwriters have started asking about cryptographic inventories in renewal questionnaires.

None of this requires a finished migration by 2027. It does require visibility: a credible cryptographic inventory, a migration roadmap, and evidence that the organisation is moving. Cryptographic discovery is the cheapest, fastest, and lowest-risk step in the whole journey. You can get meaningful visibility into your codebase in an afternoon. Everything after that is easier once you know what you have.

Zerberus PQC is currently open to pilot customers only. If you are starting your PQC readiness journey and want early access or want to compare notes with a team building in this space, reach out to us at leads@zerberus.ai.
